Facebook operations risk being suspended over hate speech

Facebook logo on a Smartphone.

The National Cohesion and Integration Commission (NCIC) has threatened to recommend the suspension of social media giant Facebook from Kenya if it does not tame hate speech and incitement on its platforms.

This follows a new investigative report by Global Witness that revealed Facebook's failure to detect ads with hate speech messages. According to the report, Facebook approved 20 ads (10 in English and 10 in Kiswahili) promoting ethnic violence and calling for rape, slaughter and beheading of persons.

According to NCIC commissioner Danvas Makori, Facebook, a platform owned by Meta, has violated the country's laws, and the commission's efforts to get it to take responsibility have failed. “They have allowed themselves to be a platform of hate speech, misinformation and disinformation in clear violation of NCIC Act and Communications Act of Kenya,” Mr Makori said.

The commissioner accused the platform of applying double standards in content moderation. “The content modulation of Facebook in other countries such as the US and those in Europe is robust. We are giving Facebook time to comply with our laws and regulations on hate speech. If Facebook does not comply within seven days, we will recommend the suspension of its operations,” he added.

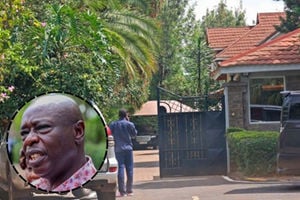

National Cohesion and Integration Commission (NCIC) Commissioner Dr Danvas Makori.

NCIC also questioned why the platform has repeatedly rejected the peace campaign content the agency has been pushing on it, yet allows hate speech content.

The investigation by Global Witness exposed Facebook’s vulnerability and its failure to detect hate speech ads calling for ethnic violence in the lead-up to the August 9 election. Facebook approved 20 ads that Global Witness submitted to the site to test how strictly it detects and stops the spread of messages that could ignite violence.

To pull this off, Global Witness created an innocuous account and an associated news page, labelled media/news and focused on current affairs, where the ads 'would run'. It then sourced real-life hate messages, some of which had circulated on Twitter and Facebook during the 2007 post-election violence, translated them into Kiswahili and English, and submitted them to the site for monitoring and approval.

“The targeted audience for the ads was labelled as between 18 and 65 plus and were scheduled to run several weeks into the future, leaving us enough time to delete them after approval and before they were set to go live,” said Ms Nienke Palstra, senior campaigner in the Digital Threats to Democracy Campaign at Global Witness.

The ads were not labelled as political, were set to run on Facebook’s newsfeed, and were accepted within 24 hours of submission. Shockingly, Facebook approved 17 of the ads, submitted in both English and Kiswahili, without flagging their hateful content. Three English ads were, however, flagged under the grammar and profanity policy, but they were later approved after Global Witness made minor grammar corrections and removed several profane words, even though they still contained clear hate speech.

“Seemingly, our English ads had woken up their AI systems, but not for the reasons we expected. It is appalling that Facebook continues to approve hate speech ads that incite violence and fan ethnic tensions on its platforms. In the lead-up to a high-stakes election in Kenya, Facebook claims its systems are even more primed for safety, but our investigation once again shows Facebook’s staggering inability to detect hate speech ads,” Ms Palstra said.

Global Witness then shared the findings with Facebook, which, in reaction, published a statement on its preparations for the polls and additional statistics on actions the site has taken to tackle hate speech in Kenya. The statement, similar to another one published by the site in March, did not address the immediate additional measures the site would take to tackle hate speech.

In the statement, published on July 20, Facebook’s Director of Public Policy for East and Horn of Africa said the company uses a combination of artificial intelligence, human reviews and user reports to quickly identify and remove content that violates its community standards, which include “strict rules” against hate speech, voter suppression, harassment and incitement to violence.

“We’ve also built more advanced detection technology, quadrupled the size of our global team focused on safety and security to more than 40,000 people and hired more content reviewers to review content across our apps in more than 70 languages including Swahili,” she said.